Showing China the banhammer

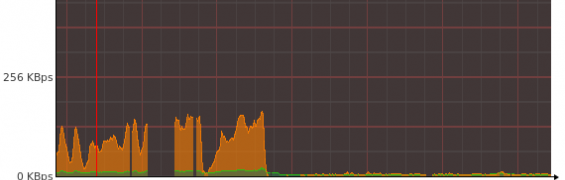

Recently I noticed a spike in my server's overall bandwidth usage. A little investigation turned up some interesting, albeit confusing, details.

First contact

Towards the end of last month's billing cycle for my server, I realised I'd overshot my bandwidth cap by a little bit. This wasn't unusual, as I'd been pushing it in previous months, but I decided to keep an eye on it at the start of the next month.

Two days into the next billing cycle, I'd already used up half of my available bandwidth. Oh dear, something was definitely wrong.

Investigation

I figured the bandwidth spike could be due to a number of things:

- My website is suddenly crazy popular and generating huge amounts of traffic - optimistic

- Someone is hotlinking images on my site to somewhere else really popular - unlikely

- My server has been hijacked and is being used as a reverse proxy - I hope not

- Spammers are attempting to post comments an inordinate number of times - possible

- There's an error in the reporting - unlikely

So to narrow down the options, I enlisted MammothVPS's performance page, CloudFlare, AWStats, netstat, and IPTraf. They showed that the huge amount of traffic was on port 80, at a fairly consistent rate of 250-300kb/s, and had been for the past few days. A couple of netstat commands then showed that most of Apache's workers were tied up with connections from a small number of IPs.
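The exact netstat commands aren't listed here, so this is only a minimal sketch of one common way to get that per-IP view. It assumes the net-tools `netstat -ntu` output layout (local address in column 4, remote address in column 5), and `top_port80_peers` is a hypothetical helper name. It reads netstat output on stdin so it can also be tried against saved output.

```shell
#!/bin/sh
# Sketch: count connections to local port 80, grouped by remote IP.
# Assumes net-tools netstat output: column 4 = local, column 5 = remote.
top_port80_peers() {
  awk '
    # Keep only rows whose local address ends in port 80.
    $4 ~ /:80$/ {
      ip = $5
      sub(/:[0-9]+$/, "", ip)   # strip the remote port
      print ip
    }
  ' | sort | uniq -c | sort -rn | head -n "${1:-10}"
}

# Usage on a live server (hypothetical, needs net-tools installed):
#   netstat -ntu | top_port80_peers 10
```

The output is one line per remote IP with its connection count, busiest first, which makes a handful of dominant addresses stand out quickly.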

Further perusal of the AWStats pages for my server showed both the large spike and the narrow range of IPs the requests came from.

The first thing to notice is that most of the requests in the top 10 list come from either 120.43.0.0/16 or 27.159.0.0/16. From there, it didn't take much to work out that the traffic originated in China, on the Chinanet Fujian Province Network. Also interesting: ALL of the requests from this rather distributed attack were for a page that doesn't exist on my site, another sign of malicious intent.
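AWStats did this grouping for me, but a similar breakdown can be pulled straight from Apache's access log. This is a rough sketch, not what I actually ran: it assumes the standard common/combined LogFormat (whitespace-separated field 1 is the client IP, field 9 the status code), skips IPv6 clients, and `count_404_by_16` is a hypothetical helper name. It counts 404 responses per /16 prefix.

```shell
#!/bin/sh
# Sketch: group 404 responses in an Apache access log by /16 prefix.
# Assumes common/combined LogFormat: $1 = client IP, $9 = status code.
# Only dotted IPv4 clients are counted; IPv6 addresses are skipped.
count_404_by_16() {
  awk '
    $9 == 404 && $1 ~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$/ {
      split($1, o, ".")
      print o[1] "." o[2] ".0.0/16"
    }
  ' "$@" | sort | uniq -c | sort -rn
}

# Usage (log path is an example; it varies by distribution):
#   count_404_by_16 /var/log/apache2/access.log | head
```

With no file argument the function reads the log from stdin, so it also works at the end of a `zcat` pipeline over rotated logs.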

Unfortunately, with around 80,000 page requests and 230KB delivered for each 404 response, that added up to roughly 17.5GB of wasted bandwidth.
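The 17.5GB figure works out if 1GB is taken as 1024 × 1024KB: 80,000 × 230KB = 18,400,000KB, which is about 17.5GB in binary units (or about 18.4GB in decimal units). A quick awk check of that sum, using a hypothetical `wasted_gb` helper:

```shell
#!/bin/sh
# Back-of-the-envelope check: requests x KB per response, converted to GB.
# Uses binary units (1 GB = 1024 * 1024 KB), which is what gives ~17.5GB.
wasted_gb() {
  awk -v reqs="$1" -v kb="$2" 'BEGIN { printf "%.1f\n", reqs * kb / 1024 / 1024 }'
}

wasted_gb 80000 230   # prints 17.5
```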

Dealing with it

The simplest way to block all these requests was not to add the IPs to Drupal's ban list (a rather ineffective way of restricting access), but to add them to iptables or to my CDN/optimiser, CloudFlare.

A number of commands like this one:

    iptables -A INPUT -s 120.43.0.0/16 -j DROP

run for each of the different ranges on my server, plus the same ranges blocked in CloudFlare's configuration pages, really quietened things down. I have to consider myself far less popular now, as I'm not getting nearly the same volume of bandwidth per day.
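Rather than typing the iptables command once per range, a small loop can do the repetitive part. This is a sketch under a few assumptions: only the two ranges named in this post are listed (the full list isn't reproduced here), it only prints the commands unless `APPLY=1` is set, and `block_ranges` is a hypothetical name. Actually applying the rules needs root.

```shell
#!/bin/sh
# Sketch: add a DROP rule for each source range in RANGES.
# Only the two ranges named in the post are listed; extend as needed.
# Prints the commands by default; set APPLY=1 (as root) to run them.
RANGES="120.43.0.0/16 27.159.0.0/16"

block_ranges() {
  for range in $RANGES; do
    if [ "${APPLY:-0}" = "1" ]; then
      # iptables -C checks whether an identical rule already exists,
      # so rerunning the script does not stack duplicate rules.
      iptables -C INPUT -s "$range" -j DROP 2>/dev/null ||
        iptables -A INPUT -s "$range" -j DROP
    else
      echo "iptables -A INPUT -s $range -j DROP"
    fi
  done
}

# Usage:
#   block_ranges            # preview the commands
#   APPLY=1 block_ranges    # apply them (as root)
```

Rules added this way live only in the running kernel, so they disappear on reboot unless saved, for example with `iptables-save` or your distribution's persistence mechanism.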

Bye bye 400000 IPs!
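For a sense of scale, an IPv4 /N range covers 2^(32 - N) addresses, so each /16 holds 65,536 addresses and a total around 400,000 corresponds to roughly six /16 blocks. Only two ranges are named in this post, so this sketch simply totals whatever ranges it is given. It assumes IPv4 ranges written as `a.b.c.d/N`, does not merge overlapping ranges, and `total_addresses` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: total number of IPv4 addresses covered by a list of CIDR ranges.
# Assumes a.b.c.d/N notation; overlapping ranges are counted twice.
total_addresses() {
  total=0
  for range in "$@"; do
    prefix=${range#*/}                          # e.g. "16" from "120.43.0.0/16"
    total=$(( total + (1 << (32 - prefix)) ))   # a /N holds 2^(32-N) addresses
  done
  echo "$total"
}

total_addresses 120.43.0.0/16 27.159.0.0/16   # prints 131072
```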